[OpenClaw] The OpenClaw Problem: When Personal AI Agents Meet Corporate Data

Personal AI agents like OpenClaw are increasingly touching corporate data. Understand the data governance risks and how enterprises should respond.

![[OpenClaw] The OpenClaw Problem: When Personal AI Agents Meet Corporate Data](https://storage.ghost.io/c/40/ac/40ac18d7-d0cb-4132-9e8b-e6740dedd4ad/content/images/size/w1200/2026/02/Anyreach-Openclaw-Blog-Cover--4--12.png)

The Data Boundary Has Dissolved

For decades, enterprise security has been built around the concept of a data boundary — a perimeter within which corporate data lives, protected by layers of security controls. Cloud adoption stretched this boundary. Remote work stretched it further. Personal AI agents have dissolved it entirely.

When an employee connects OpenClaw to their work email, corporate calendar, Slack workspace, or shared documents, corporate data begins flowing to and through personal infrastructure with no organizational controls. The agent processes the data, caches it, reasons about it, and stores conversation history — all on hardware that IT has never seen, on a network IT does not control, using software that IT has not approved.

What Data Is at Risk

The scope of exposure depends on what the employee connects to their personal agent, but several patterns are common. Email content often contains customer information, contract details, strategic discussions, and financial data. Calendar data reveals organizational priorities, client relationships, and deal timelines. Messaging content from Slack, Teams, or other platforms often contains the most candid and sensitive internal communications. Shared documents, including reports, spreadsheets, and presentations, may contain proprietary data, customer lists, and competitive intelligence.

Critically, the employee may not even realize the scope of data their agent is processing. An agent configured to help manage email does not distinguish between a routine meeting invite and an email containing a client's financial information. It processes everything with the same access the employee has.

The Governance Gap

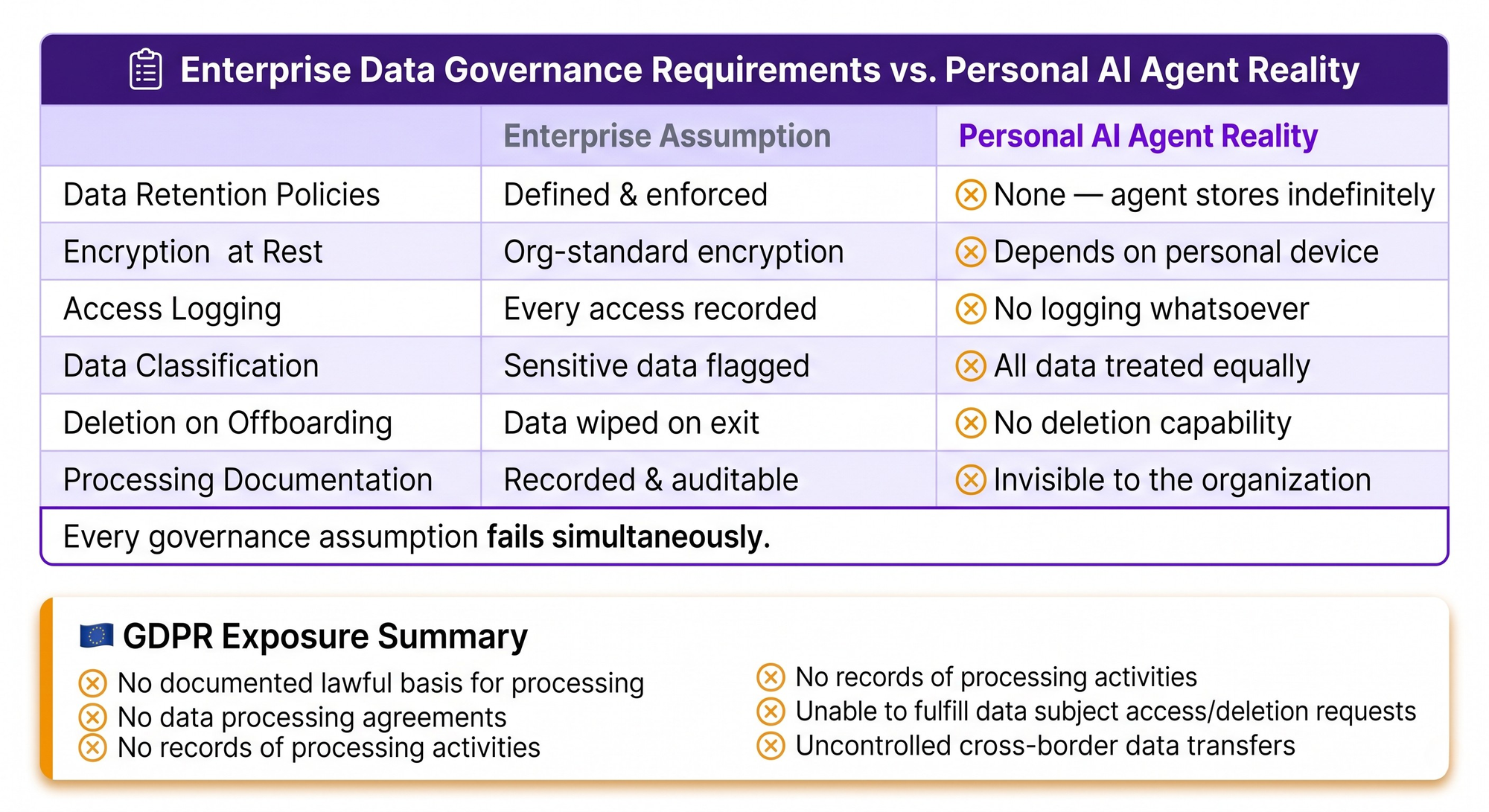

Enterprise data governance frameworks assume that data processing occurs on managed systems with documented controls. When data flows to personal AI agents, those assumptions fail. There are no retention policies governing how long the agent stores data. There are no encryption standards for data at rest on the personal device. There is no access logging showing what data was accessed and when. There is no classification enforcement ensuring sensitive data receives appropriate handling. There is no way for the organization to delete the data when the employee leaves.
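
As a rough illustration, the five controls listed above can be written down as a simple checklist and compared across environments. This is a minimal sketch, not a real governance tool: the `GovernanceControls` class, the `missing_controls` helper, and both example profiles are hypothetical names and values invented for this example.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class GovernanceControls:
    """Hypothetical checklist of the five controls named above.

    True means the organization can show the control is in place.
    """
    retention_policy: bool
    encryption_at_rest: bool
    access_logging: bool
    classification_enforcement: bool
    remote_deletion: bool


def missing_controls(controls: GovernanceControls) -> list[str]:
    """Return the names of controls that are not in place, in declaration order."""
    return [f.name for f in fields(controls) if not getattr(controls, f.name)]


# Illustrative profiles only; a real assessment would be evidence-based.
managed_system = GovernanceControls(True, True, True, True, True)
personal_agent = GovernanceControls(False, False, False, False, False)

if __name__ == "__main__":
    for label, profile in [("managed system", managed_system),
                           ("personal agent", personal_agent)]:
        gaps = missing_controls(profile)
        print(f"{label}: {len(gaps)} gap(s) {gaps}")
```

In this sketch the managed system reports no gaps, while the personal agent fails every check, because the organization has no visibility from which to verify any of the controls.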

For organizations subject to data protection regulations like GDPR, the situation is particularly acute. GDPR requires a documented lawful basis for processing personal data, data processing agreements with processors that handle that data on the organization's behalf, records of processing activities, and the ability to honor data subject access and deletion requests. None of these requirements can be met when personal data is processed by personal AI agents outside organizational control.

Closing the Gap

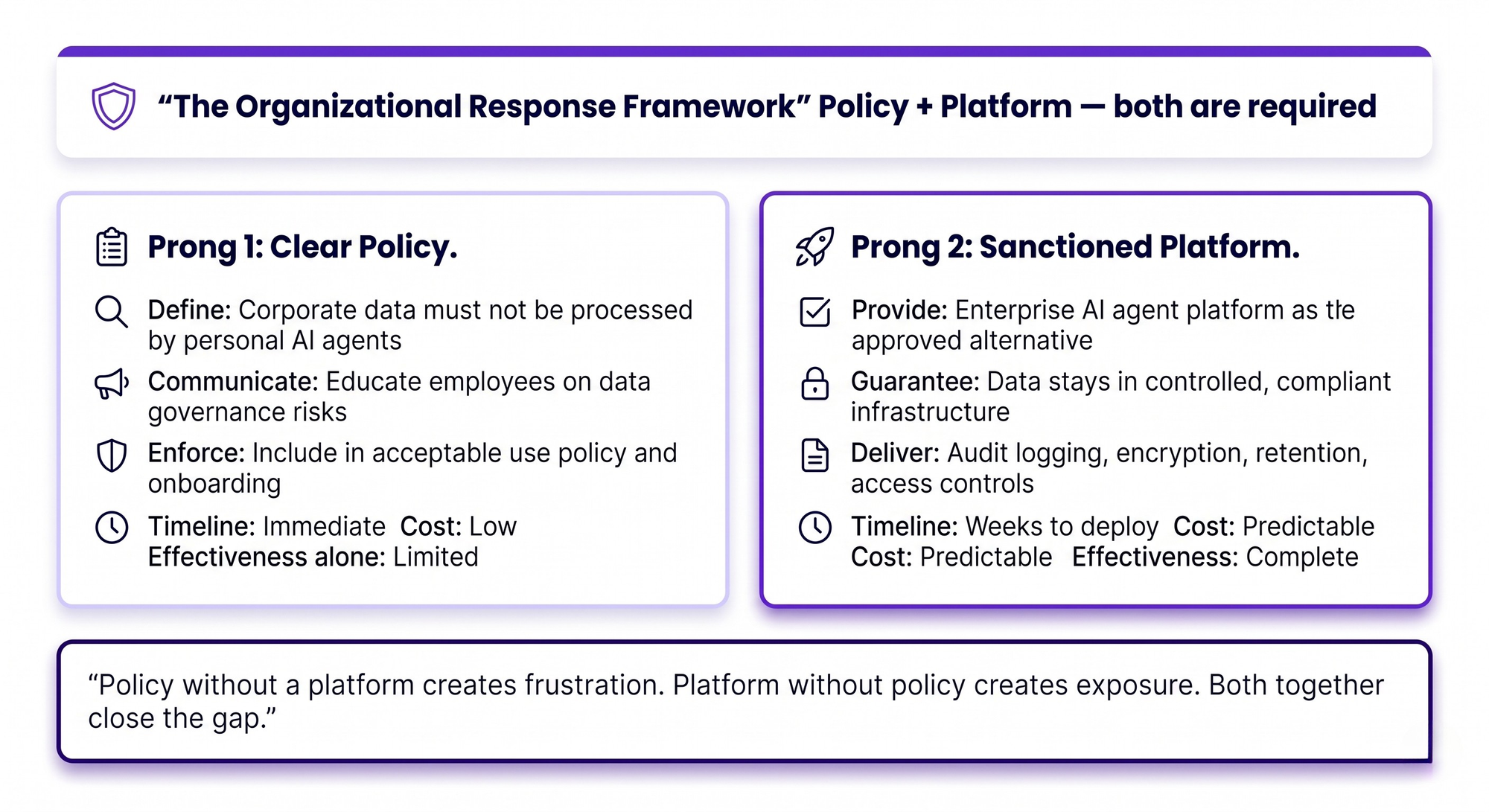

Organizations need a two-pronged approach. First, implement clear policies specifying that corporate data must not be processed by personal AI agents, and communicate the risks to employees. Second, and more importantly, provide a sanctioned alternative that satisfies the same needs.

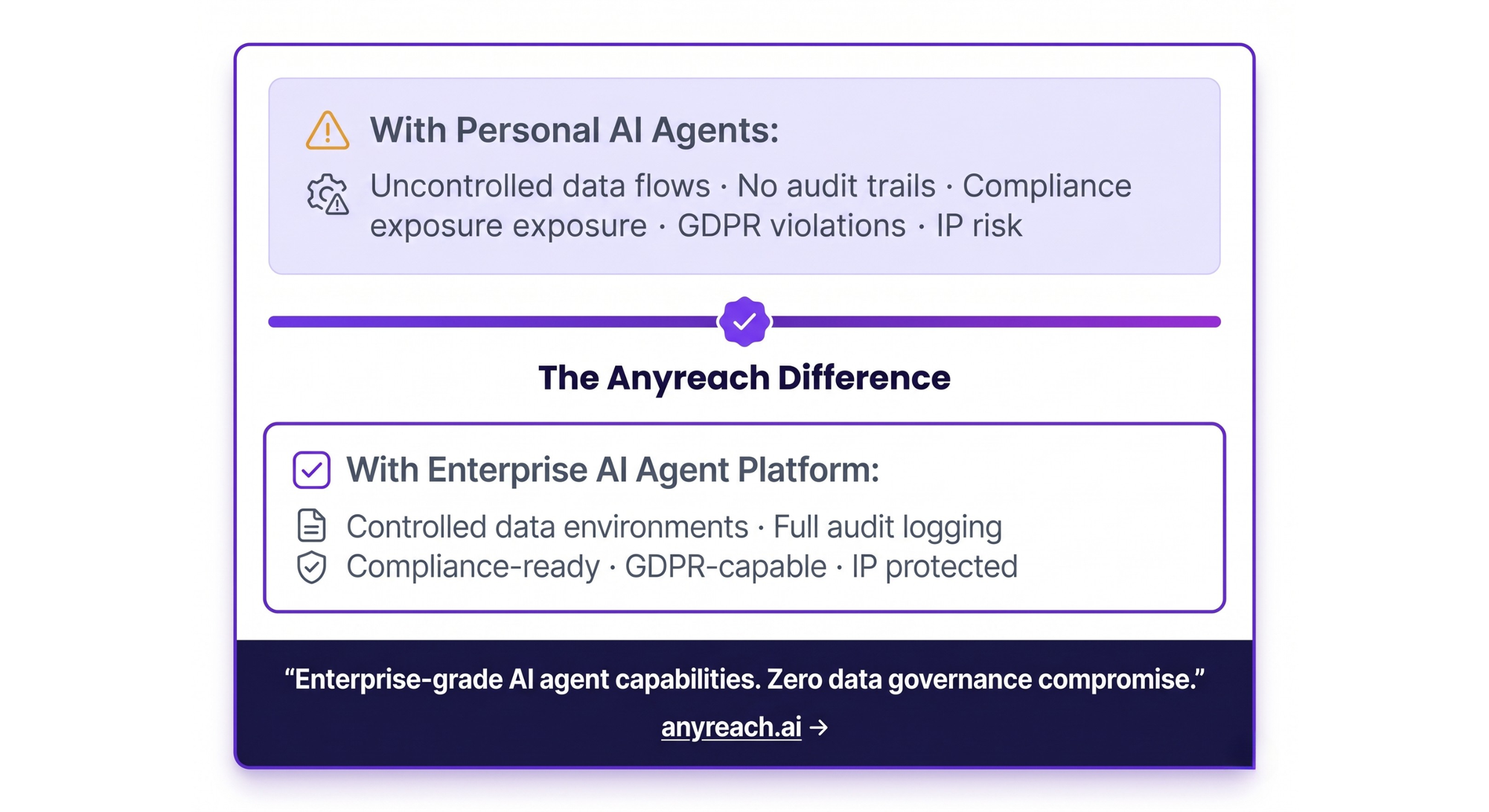

Enterprise AI agent platforms like Anyreach process corporate data within managed, compliant infrastructure where every data governance requirement can be met. Data remains within controlled environments with appropriate encryption, access controls, audit logging, and retention policies. The AI agent capabilities employees want are delivered without the data governance risks of personal agents.

The organizations that move quickly on both fronts — clear policy plus compelling alternative — will minimize their exposure while capturing the productivity benefits of AI agents.

Frequently Asked Questions

What happens when personal AI agents access corporate data?

Corporate data flows to unmanaged personal infrastructure without audit trails, encryption standards, retention policies, or access controls. The data is processed, cached, and stored on hardware outside organizational control, creating data governance violations and potential compliance exposure.

Can data governance policies prevent personal AI agent usage?

Policies alone are insufficient because personal AI agent usage is nearly impossible to detect through technical means. Effective governance requires both clear policies and a sanctioned enterprise AI agent platform that provides a compliant alternative.

What is the GDPR risk of personal AI agents?

Personal AI agents processing corporate data can create GDPR violations, including undocumented processing activities, missing data processing agreements, inability to honor data subject rights, and uncontrolled cross-border data transfers.

